Machine Learning Operations

Operate reliable AI solutions with MLOps

Bringing your machine learning and AI solutions into production in a sustainable and scalable way comes with a wide range of challenges. We help you overcome them. With a strong focus on engineering, processes, and culture, our MLOps approach ensures that your ML initiatives deliver long-term value and operate reliably over time.

With MLOps you unlock sustainable business value from your AI initiatives.

Developing a machine learning model is one thing. Operating it reliably, scalably, and maintainably in a production environment is an entirely different challenge. In many organizations, data scientists build models and hand them over to IT infrastructure teams without further training or guidance. This often leads to friction, errors, undetected data drift, and models that gradually become outdated without anyone noticing.

Our MLOps services take a structured approach to these challenges: we define how models are built, tested, deployed, monitored, and updated through repeatable, automated processes.

Our MLOps Service Offering

Infrastructure

We establish the technical environment in which models are trained, versioned, and deployed. This can include cloud-based platforms as well as on-premises infrastructure.

Pipeline Automation

We design automated workflows that prepare data, train and evaluate models, and deploy them to production, without requiring manual intervention for each iteration.

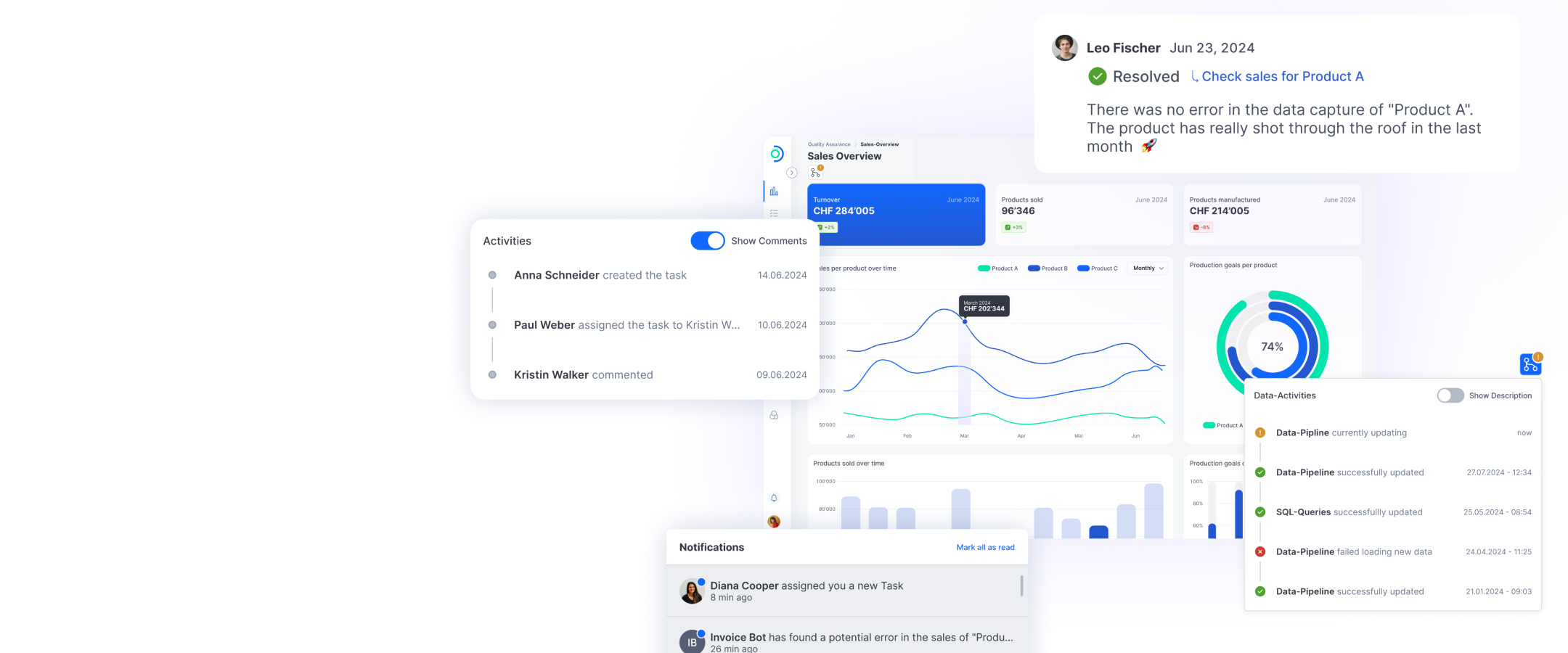
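As a minimal illustration of such a workflow, the sketch below chains data preparation, training, evaluation, and a deployment gate into a single function. Everything in it is hypothetical: the names `prepare`, `train`, `evaluate`, and `run_pipeline`, the toy threshold model, and the 0.9 accuracy gate are assumptions chosen for brevity, not a description of a specific platform or toolchain.

```python
from typing import Dict, List, Tuple

Row = Tuple[float, int]  # (feature value, binary label)


def prepare(raw: List[Row]) -> List[Row]:
    """Stand-in for data cleaning: drop rows with a negative feature value."""
    return [(x, y) for x, y in raw if x >= 0]


def train(rows: List[Row]) -> float:
    """Toy model: a threshold halfway between the two class means."""
    pos = [x for x, y in rows if y == 1]
    neg = [x for x, y in rows if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def evaluate(threshold: float, rows: List[Row]) -> float:
    """Share of rows for which 'feature > threshold' matches the label."""
    correct = sum((x > threshold) == bool(y) for x, y in rows)
    return correct / len(rows)


def run_pipeline(raw: List[Row], min_accuracy: float = 0.9) -> Dict[str, object]:
    """Run every step in order; deploy only if the holdout accuracy passes the gate."""
    rows = prepare(raw)
    train_rows, holdout_rows = rows[::2], rows[1::2]  # simple alternating split
    threshold = train(train_rows)
    accuracy = evaluate(threshold, holdout_rows)
    return {
        "threshold": threshold,
        "accuracy": accuracy,
        "deployed": accuracy >= min_accuracy,  # assumed quality gate
    }


if __name__ == "__main__":
    data = [(1.0, 0), (2.0, 0), (8.0, 1), (9.0, 1), (-5.0, 1)]
    print(run_pipeline(data))
```

In a real setup each step would typically be a separate, versioned stage triggered by an orchestrator; the point of the sketch is only that no step waits for a person to start it.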

Monitoring

We implement systems that continuously verify whether a model is still performing as expected—for example, by detecting changes in input data (data drift) or identifying declines in prediction quality.
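One common way to quantify data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample and in current production data. The sketch below is a plain-Python illustration under stated assumptions: the function name `psi`, the choice of ten equal-width bins, and the alert threshold of 0.2 (a frequently quoted rule of thumb) are illustrative and not prescribed by any particular monitoring product.

```python
import math
from typing import List, Sequence


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a current sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not cause log(0)
        return [max(c / len(values), eps) for c in counts]

    ref, cur = fractions(reference), fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb alert level

if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]
    shifted = [v + 0.5 for v in reference]
    score = psi(reference, shifted)
    print("drift detected" if score > DRIFT_THRESHOLD else "stable")
```

A check like this would usually run on a schedule for each important input feature, alongside a separate check on prediction quality once ground-truth labels become available.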

Retraining Processes

We automate the decision of when and how a model should be retrained once its performance begins to decline.
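A retraining decision of this kind can be expressed as a small set of explicit rules. The following sketch is illustrative only: the `ModelHealth` fields, the function `retraining_reasons`, and all three thresholds are hypothetical values that would need to be set per use case.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModelHealth:
    accuracy: float           # latest measured prediction quality
    drift_score: float        # e.g. a drift metric on the input data
    days_since_training: int  # age of the deployed model


def retraining_reasons(
    health: ModelHealth,
    min_accuracy: float = 0.85,  # assumed thresholds, tune per use case
    max_drift: float = 0.2,
    max_age_days: int = 90,
) -> List[str]:
    """Return every rule that fired; an empty list means 'keep the model'."""
    reasons = []
    if health.accuracy < min_accuracy:
        reasons.append("accuracy below threshold")
    if health.drift_score > max_drift:
        reasons.append("input drift detected")
    if health.days_since_training > max_age_days:
        reasons.append("model older than allowed age")
    return reasons


if __name__ == "__main__":
    health = ModelHealth(accuracy=0.80, drift_score=0.05, days_since_training=30)
    reasons = retraining_reasons(health)
    print("retrain: " + ", ".join(reasons) if reasons else "keep current model")
```

Returning the reasons rather than a bare yes or no keeps the decision auditable, which matters when a retraining run has to be justified later.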

Discover our approaches to building, operating, and integrating machine learning systems and LLMs.

By leveraging MLOps, you gain:

#1

Accelerated project ROI

Significantly shorten the time from development to production, enabling faster return on investment.

#2

Reliable and scalable systems

Reduce downtime and ensure your AI systems are equipped to meet the complex demands of daily business operations.

#3

Enhanced control and compliance

Respond proactively to regulatory requirements, such as the EU AI Act, and turn compliance into a competitive advantage.

#4

Increased team productivity

Standardized processes and improved collaboration reduce manual work and stabilize fragile environments, allowing data science teams to focus on delivering models and shipping solutions faster.

ti&m combines Swissness, technical excellence, and human insight to successfully implement MLOps in your organization

Extensive experience

Technical expertise with a collaborative approach

Swissness

Reliability, precision, and tailored solutions

Collaboration as equals

A true partnership with your team

Head of AI & Digital Solutions

Lisa Kondratieva

Get in touch and start scaling your AI and ML initiatives today.